And why the people responsible for fixing it are often the ones preventing it

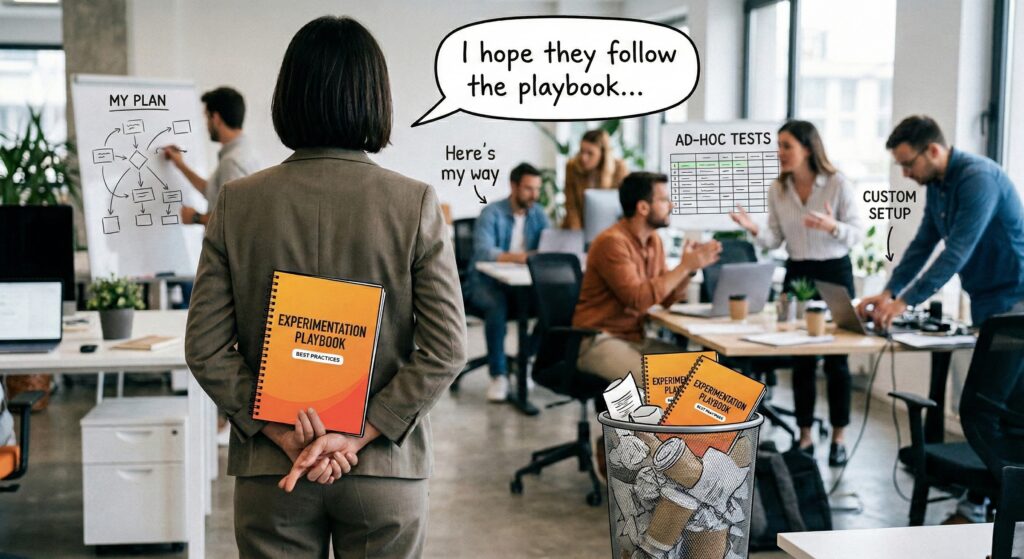

Most organisations run their experimentation programmes on hope.

Hope that teams will follow the playbook. Hope that insights will be shared. Hope that someone will connect experiments to strategy.

I have worked with experimentation teams since 2015, and this is the pattern I have watched repeat across hundreds of organisations. A company invests in testing tools, hires specialists, maybe even writes a playbook. Then they hand it all over and wait.

No enforcement. No quality gates. No system to ensure any of it actually happens.

Just hope.

The pattern I could not unsee

This is what killed me in the early days of building Effective Experiments. I would work with brilliant teams running sophisticated A/B tests who could not tell me what they had tested last quarter.

One retail organisation had unknowingly run the same checkout page test sixteen times across different teams. Another could not recall which variation actually went live after a successful experiment. A financial services company lost an entire quarter of testing data when a team member left.

These were not junior teams. These were well-funded programmes with experienced specialists, expensive tools, and executive sponsorship. They had everything they needed except the infrastructure to hold it all together.

The problem was never the quality of the experiments. It was the complete absence of operational infrastructure around them.

So we built that infrastructure. And something unexpected happened.

The resistance never came from where I expected

The more governance capability we gave teams, the more a certain type of person resisted it.

Not leadership. Leadership wanted visibility, accountability, and strategic alignment. When we showed executives what was actually happening in their programmes, they were often shocked. Tests running with no hypothesis. Experiments colliding with each other. Insights disappearing into slide decks that three people read before they vanished into shared drives.

The resistance came from the middle layer. The enablement leads. The programme managers. The people whose job it is to ensure experimentation works properly were the first to push back against the systems that would make it work properly.

And the objection was always the same.

> That is a lot of clicks.

I hear this constantly. The people responsible for enablement will tell you they want standardisation, cross-team learning, and executive visibility. They will nod along in every meeting about experimentation maturity.

But when it comes to actually building that infrastructure, they optimise for the path of least resistance. Because they lack the authority to mandate adoption, they default to whatever creates the least friction with their teams. And the least friction is always doing nothing.

What “that is a lot of clicks” actually means

Let me translate the objection:

> I cannot convince my team to do this, and I would rather lower the standard than have the difficult conversation about why discipline matters.

Consider what those “extra clicks” actually represent. A structured hypothesis. Metrics aligned to business objectives. A decision protocol agreed before the test runs. A quality gate that catches poor experiments before they waste traffic and time.

Now consider what “two clicks” actually represents. Someone opens the testing tool, creates an experiment, and runs it. No one checked whether the hypothesis was sound. No one knows if another team tested the same thing last quarter. No one agreed what would happen with the results before the test started. And after it finishes, the insights live in a slide deck that three people see before it disappears into a shared drive.

The invisible forty-five minutes

The two clicks are real. But they are the visible tip of a much larger workflow that nobody tracks.

Before those two clicks, someone had an idea. They discussed it in Slack, or Teams, or email. They built a case, sometimes in a spreadsheet, sometimes in a presentation, sometimes verbally in a meeting that was never documented. They tried to check whether anything similar had been tested before, which meant asking around or searching Slack history or hoping someone remembered. They discussed prioritisation with their pod lead, maybe using a framework, maybe on gut feel. They agreed on success criteria, possibly in writing, probably not.

After those two clicks, the experiment runs. Results come in. An analyst reviews them, eventually. Someone creates a presentation. It gets shared in a meeting. Leadership asks for an aggregate view. Someone spends days stitching together data from multiple tools. Learnings are stored somewhere, maybe in the testing tool, maybe in a wiki, maybe nowhere.

That is not a two-click process. That is a forty-five-minute workflow spread across five different tools, multiple communication channels, and at least one meeting that produced no documented output.

The clicks they want to avoid are the ones that would replace all of that hidden mess with something structured.

Hope-based governance: a definition

This is what I call hope-based governance. The organisation has a playbook. It has principles. It might even have a centre of excellence with a name, a Slack channel, and quarterly presentations to the board.

But there is no system ensuring any of it is followed. The entire programme depends on individuals choosing to do the right thing, every time, without enforcement or accountability.

The hallmarks are easy to spot:

- The playbook exists but compliance is never measured

- Quality standards are defined but nothing prevents substandard experiments from running

- Cross-team learning is encouraged but no infrastructure makes it possible

- Executive reporting happens but requires weeks of manual aggregation

- Knowledge management is valued in principle but depends entirely on individual motivation

Each of these is a hope. And every hope is a point of failure that the organisation has chosen to leave unaddressed.

The enablement trap

Here is the uncomfortable truth: the people responsible for enablement are often the biggest obstacle to it.

Not because they are incompetent. Because they are trapped.

They have the title but not the authority. They can recommend but not require. They can design the playbook but not enforce it. They can see exactly what needs to change but cannot mandate the change without risking pushback from the teams they depend on for cooperation.

So they optimise for survival rather than standards.

They position themselves as facilitators rather than governors. They avoid mandates because mandates create conflict. They accept voluntary adoption because it is easier to manage than mandatory compliance. And when someone proposes infrastructure that would enforce what the playbook recommends, they instinctively calculate the adoption friction rather than the governance value.

The result is a programme that looks mature on paper but operates on hope in practice. Leadership thinks governance is in place because someone wrote a playbook. Practitioners know the playbook is optional because nobody checks. And the enablement lead sits in the middle, managing expectations in both directions while nothing actually changes.

This is not a personal failing. It is a structural one. When organisations give someone the responsibility for experimentation governance without the authority to enforce it, they have designed a system that can only run on hope.

What hope-based governance looks like at scale

If you think this only happens in immature programmes, consider this.

One of the largest financial services companies in the world has a dedicated Centre of Excellence for experimentation. A team of five people. Executive sponsorship from SVP level. Templates for experiment documentation. Maturity models to categorise teams. Analytics partners embedded in product teams. Dedicated sessions where senior leaders review experimentation results.

By any standard measure, this is a mature programme. It has budget, headcount, leadership attention, and years of operational experience.

And yet, when you look underneath, the entire system runs on hope.

Their quality control is a person joining a call. When that person is unavailable, experiments run unchecked. Their templates have mandatory fields, but nothing prevents someone from entering a weak hypothesis and pressing submit. They are contemplating a quality score but have spent years debating what to do with it. Their knowledge base is a collection of templates that people fill in with varying levels of rigour, and the team leader openly acknowledges that they cannot verify whether someone has changed their primary metric after the experiment started.

When I asked what happens when someone disregards the guidelines, the answer was honest: “We hope they don’t.”

When I asked whether they had the authority to stop a substandard experiment from running, the answer was equally honest: they do not.

This is not a team that lacks commitment. The programme lead described his early days in the Centre of Excellence as exhausting, spending every day reviewing experiments, coaching teams, and trying to maintain standards across an organisation with tens of thousands of digital properties across dozens of markets. He was, in his own words, dying every single day under the weight of it.

The problem was not effort. It was architecture. The entire governance model was built on human review, personal relationships, and trust that mature teams would do the right thing. There was no system that made governance automatic. No infrastructure that caught problems without a person being present. No mechanism that scaled beyond the capacity of five people to attend calls and write notes.

They even acknowledged that the word “governance” itself was toxic within the organisation. Teams resisted it. So instead of building governance infrastructure, they built relationships and called it “mutual bonding.” They became friends with the teams they were supposed to govern, because friendship was the only leverage they had.

This is hope-based governance at its most sophisticated. It has every ingredient except the one that matters: a system that works when the humans are not watching.
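One gap from that story, not being able to verify whether a primary metric changed after launch, is the kind of check a system can run without a person present. What follows is a minimal sketch, not a description of any particular product: the field names (`primary_metric`, `decision_protocol`) and the helper functions are hypothetical, and it assumes the plan is stored as a simple dictionary and fingerprinted when the experiment starts.

```python
import hashlib
import json


def fingerprint(plan: dict) -> str:
    """Stable SHA-256 digest of the pre-registered fields of a plan."""
    # Sorting keys makes the digest independent of field order.
    canonical = json.dumps(plan, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_fields(registered: dict, current: dict) -> list:
    """Names of fields whose values differ from the registered plan."""
    keys = sorted(set(registered) | set(current))
    return [k for k in keys if registered.get(k) != current.get(k)]


# Hypothetical plan, registered and fingerprinted at launch.
registered = {
    "primary_metric": "order_conversion",
    "decision_protocol": "Ship on a significant lift; otherwise archive",
}
locked = fingerprint(registered)

# Later, someone quietly swaps the primary metric.
current = dict(registered, primary_metric="add_to_cart_rate")

if fingerprint(current) != locked:
    print("Plan changed after launch:", changed_fields(registered, current))
```

Nothing here stops a legitimate change. It only guarantees that a change cannot happen silently, which is the part that human review could not promise.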

Why we built Efestra

Nearly a decade of watching this pattern is the reason we evolved from Effective Experiments into Efestra.

In the early years, we built a better place to document experiments. That helped. Teams could finally see their history, reference past learnings, and demonstrate their work to stakeholders. But documentation is not governance. A well-organised filing cabinet does not prevent bad experiments from running.

The more we worked with enterprise programmes, the clearer the picture became. The organisations that struggled were not lacking tools or talent. They were lacking infrastructure that made governance automatic rather than aspirational.

The industry kept treating governance as a culture problem. Run workshops. Write playbooks. Build a centre of excellence. Hope people follow through.

We built Efestra on the opposite principle.

> Infrastructure does what hope cannot.

When the system requires a structured hypothesis before an experiment can proceed, you do not need to hope people will write one. When insights are captured automatically rather than relying on analyst motivation, you do not need to hope someone will document them. When collisions are detected by the system rather than by chance conversations, you do not need to hope teams will coordinate. When decision protocols are registered before a test runs, you do not need to hope someone will remember what was agreed.

That is not adding clicks. That is replacing the forty-five minutes of scattered, undocumented, unreliable work with five minutes of structured, enforceable, visible process.
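To make the idea of an automatic gate concrete, here is a rough sketch of a pre-launch check covering three of the points above: a structured hypothesis, a registered decision protocol, and collision detection. It is an illustration under stated assumptions, not Efestra's implementation. The `ExperimentPlan` type, the `gate_failures` function, and the eight-word threshold for a "thin" hypothesis are all hypothetical, and a collision is assumed to mean two experiments on the same page with overlapping dates.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ExperimentPlan:
    """Hypothetical record of what must exist before a test may launch."""
    name: str
    page: str                # surface under test, e.g. "checkout"
    hypothesis: str
    primary_metric: str
    decision_protocol: str   # what happens on a win, a loss, or no result
    start: date
    end: date


def gate_failures(plan, running):
    """Return the reasons this plan may not launch (empty list = cleared)."""
    failures = []
    # Crude stand-in for a real quality rule: very short hypotheses fail.
    if len(plan.hypothesis.split()) < 8:
        failures.append("hypothesis is missing or too thin")
    if not plan.primary_metric.strip():
        failures.append("no primary metric")
    if not plan.decision_protocol.strip():
        failures.append("no decision protocol registered")
    # Collision: same page, overlapping date ranges.
    for other in running:
        overlaps = plan.start <= other.end and other.start <= plan.end
        if other.page == plan.page and overlaps:
            failures.append(f"collides with '{other.name}' on {plan.page}")
    return failures


live = ExperimentPlan(
    name="Checkout CTA copy",
    page="checkout",
    hypothesis="Because users hesitate at payment, clearer CTA copy "
               "will lift completed orders",
    primary_metric="order_conversion",
    decision_protocol="Ship on a significant lift; otherwise archive",
    start=date(2024, 3, 1),
    end=date(2024, 3, 21),
)
draft = ExperimentPlan(
    name="Checkout button colour",
    page="checkout",
    hypothesis="Green is better",
    primary_metric="order_conversion",
    decision_protocol="",
    start=date(2024, 3, 10),
    end=date(2024, 3, 24),
)

for reason in gate_failures(draft, [live]):
    print("BLOCKED:", reason)
```

The draft is blocked for three reasons: a thin hypothesis, no decision protocol, and a collision with the live checkout test. The point of the design is that the check runs whether or not anyone joins a call.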

What moving beyond hope looks like

The organisations that move beyond hope-based governance share one characteristic. Leadership decides that experimentation discipline is non-negotiable.

Not because they distrust their teams. Because they recognise that relying on individual motivation at scale is not a strategy. It is a gamble.

In finance, you do not hope people will follow spending policies. You build systems that enforce them. In engineering, you do not hope people will write tests for their code. You build pipelines that require them. In compliance, you do not hope people will follow regulations. You build controls that make non-compliance impossible.

Experimentation is the only function where organisations still believe that a playbook and a positive attitude are sufficient governance. Where the operating model is: hire smart people, give them tools, write down some guidelines, and hope for the best.

It works when you have five people running experiments. It breaks when you have fifty. It is catastrophic when you have five hundred, spread across multiple teams, using different tools, with different standards, answering to different stakeholders, and sharing nothing.

The signal you should not ignore

Every “that is a lot of clicks” objection is a signal. Not that the governance is too heavy. That the organisation has not yet committed to making experimentation a real capability rather than a well-funded hobby.

If your enablement lead is optimising for practitioner convenience over programme integrity, the problem is not the enablement lead. The problem is that nobody gave them the authority to do their job properly. And until someone does, the programme will continue to run on hope.

Hope is not governance. Systems are.

There is a different way for organisations that value evidence-based decision-making. Learn more at https://efestra.com