Learning Center • 1-15 Minutes • Foundation Level

Understanding The Evidence Gap

The Evidence Gap is the disconnect between generating evidence through experiments and research and using that evidence to inform the strategic decisions that matter. It is the reason organisations invest heavily in experimentation and research but struggle to show how those investments shape business outcomes.

What is the Evidence Gap?

The Evidence Gap is the space between the evidence organisations generate through experiments, research, and customer data and the strategic decisions that evidence should inform. It is not a single failure point. It is a systemic infrastructure problem that spans three distinct layers: the trustworthiness of the evidence itself, the ability to connect evidence across teams and time, and whether that evidence actually reaches the people making strategic decisions.

Most organisations assume their problem is generating better evidence. They invest in more sophisticated testing tools, hire more analysts, and run more experiments. But the gap rarely sits at the point of evidence generation. It sits in what happens after the evidence is created.

The result is a growing disconnect between the investment organisations make in evidence generation and the strategic value they extract from it. Teams run hundreds of experiments per year, produce thousands of research insights, and generate mountains of customer data. Yet leadership cannot confidently point to how those investments have shaped the decisions that matter.

Key Insight

The Evidence Gap is not about generating better evidence. It is about the missing infrastructure that ensures evidence is trustworthy, connected across the organisation, and carried into the strategic decisions it should inform.

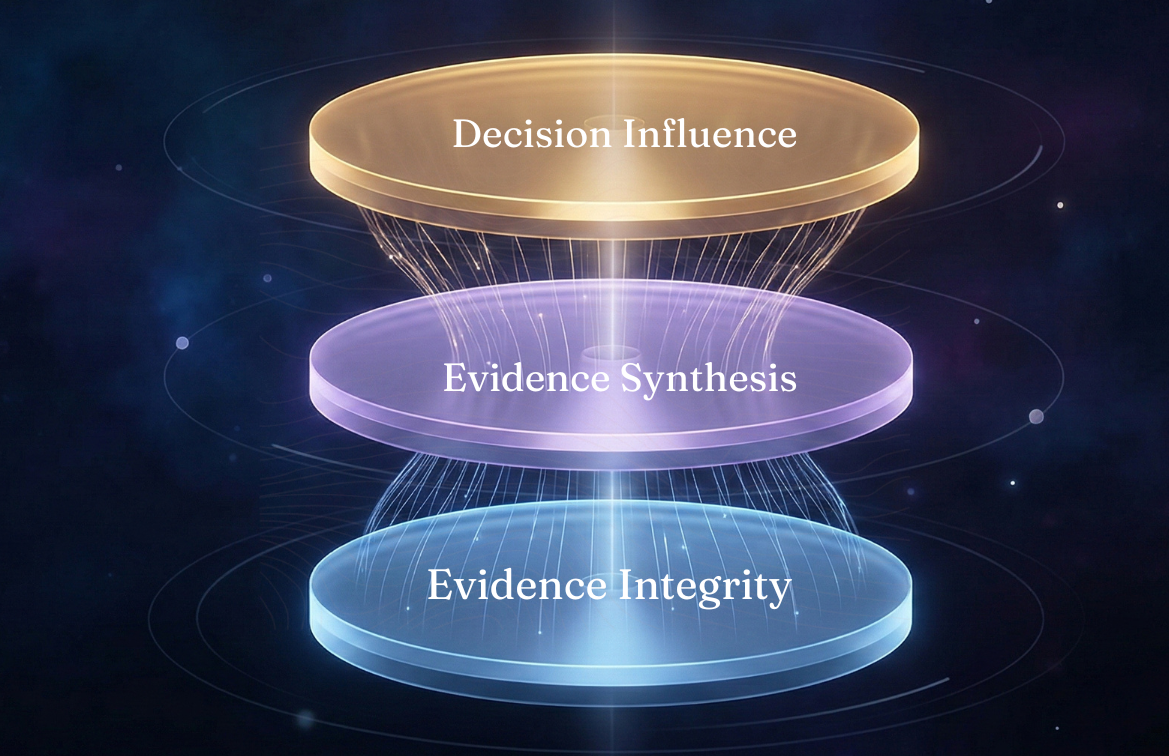

The Decision Intelligence Stack

Understanding where the Evidence Gap exists in your organisation requires a framework for diagnosing which layers are failing. The Decision Intelligence Stack defines the three layers of infrastructure required to close the gap, with governance running through each as connective tissue.

Layer 1: Evidence Integrity

Can we trust what we have generated?

This layer ensures that experiments are designed properly, are free from collisions with one another, and are methodologically sound, and that results are interpreted correctly. It covers research design quality, appropriate sampling, and conclusions that are genuinely supported by the data. Without this layer, every decision built on top is compromised. This is where the Trust Gap originates: the disconnect between what results appear to show and what leadership can confidently act upon.
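One way to make the "free from collisions" check concrete is to flag experiments that run on the same surface at the same time. The sketch below is illustrative only. The `Experiment` record, its `surface` field, and `find_collisions` are hypothetical names, and it assumes a collision means two experiments that target the same surface over overlapping dates. A real programme may also need to consider audience overlap and interaction effects.

```python
from dataclasses import dataclass
from datetime import date
from itertools import combinations


@dataclass(frozen=True)
class Experiment:
    name: str
    surface: str  # hypothetical label for the page or feature under test
    start: date
    end: date     # inclusive end date


def find_collisions(experiments):
    """Return pairs of experiment names that share a surface and overlap in time."""
    collisions = []
    for a, b in combinations(experiments, 2):
        same_surface = a.surface == b.surface
        # Two inclusive date ranges overlap when each starts on or before the other ends.
        overlaps = a.start <= b.end and b.start <= a.end
        if same_surface and overlaps:
            collisions.append((a.name, b.name))
    return collisions


experiments = [
    Experiment("checkout-cta", "checkout", date(2024, 3, 1), date(2024, 3, 21)),
    Experiment("checkout-layout", "checkout", date(2024, 3, 15), date(2024, 4, 5)),
    Experiment("homepage-hero", "homepage", date(2024, 3, 1), date(2024, 3, 31)),
]
print(find_collisions(experiments))  # [('checkout-cta', 'checkout-layout')]
```

A check like this could run when an experiment is scheduled, so that a collision is caught before launch rather than discovered when results are reviewed.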

Layer 2: Evidence Synthesis

Can we connect what we know across teams, studies, and time?

This layer connects evidence across experiments, research studies, teams, and time periods. It makes past evidence discoverable, prevents insight decay and duplicate work, and builds cumulative knowledge rather than isolated data points. This is the layer almost nobody in the industry addresses. Most organisations generate evidence in silos. Experiments live in testing tools. Research lives in slide decks. Customer data lives in analytics platforms. None of it is connected, and insights that could compound are lost the moment the project concludes.

Layer 3: Decision Influence

Does trustworthy, synthesised evidence actually reach the decisions that matter?

This layer ensures decision protocols are defined before experiments launch, that evidence is visible to decision-makers, that executive dashboards show decision influence rather than activity metrics, and that there is accountability for acting on evidence. Without this layer, even trustworthy, well-connected evidence never reaches the boardroom. The most rigorous experiment in the world delivers zero strategic value if the insight never influences a decision.
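A decision protocol defined before launch can be as simple as a record of who decides, on which metric, and what happens in each outcome. The following is a minimal sketch under assumed names (`DecisionProtocol`, `min_lift`, `resolve`), none of which come from a specific tool. It assumes a single primary metric and a relative-lift threshold agreed in advance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionProtocol:
    decision: str           # the strategic decision the evidence should inform
    owner: str              # who is accountable for acting on the result
    metric: str             # primary metric agreed before launch
    min_lift: float         # smallest relative lift that justifies action
    action_if_met: str
    action_if_not_met: str

    def resolve(self, observed_lift: float, result_is_trustworthy: bool) -> str:
        """Map an experiment outcome to the action agreed before launch."""
        if not result_is_trustworthy:
            # Layer 1 comes first: untrusted evidence should not drive a decision.
            return "No decision: result failed integrity checks"
        if observed_lift >= self.min_lift:
            return self.action_if_met
        return self.action_if_not_met


protocol = DecisionProtocol(
    decision="Annual pricing rollout",
    owner="VP Product",
    metric="annual plan conversion rate",
    min_lift=0.02,
    action_if_met="Roll out to all markets",
    action_if_not_met="Keep current pricing and revisit next quarter",
)
print(protocol.resolve(observed_lift=0.035, result_is_trustworthy=True))
```

Because the actions are written down before the experiment runs, the result cannot be reinterpreted afterwards to fit a preferred outcome, and the named owner provides the accountability this layer calls for.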

Governance as Connective Tissue

Governance is not a separate layer. It runs through all three as the mechanism that makes each function as infrastructure rather than aspiration. At Layer 1, governance is quality assurance. At Layer 2, governance is knowledge standards. At Layer 3, governance is decision accountability. When governance works, it is invisible. Teams do not feel governed. They feel supported.

Symptoms & Indicators

Organisations experiencing the Evidence Gap often exhibit clear symptoms across all three layers. These symptoms may appear as isolated problems, but they are usually interconnected indicators of missing infrastructure.

Layer 1 Symptoms: Evidence Integrity

Implementation Failures

Winning variants are not implemented as tested, or are altered during implementation in ways that invalidate the original results.

Executive Skepticism

Leadership questions the reliability of experiment results and hesitates to make strategic decisions based on test data.

Methodology Inconsistency

Different teams use different statistical standards, success definitions, and quality thresholds, making it impossible to compare results across the programme.

Vanity Metrics Focus

Teams celebrate test velocity and win rates while struggling to demonstrate actual business impact.

Layer 2 Symptoms: Evidence Synthesis

Insight Decay

Research and experiment findings that were valuable six months ago are no longer discoverable, forcing teams to re-run studies or make decisions without the evidence that already exists.

Duplicate Work

Teams unknowingly repeat experiments or research studies that have already been conducted elsewhere in the organisation, wasting resources and time.

Knowledge Silos

Insights from experiments remain trapped within teams, leading to duplicated efforts and lost learnings.

Layer 3 Symptoms: Decision Influence

Strategic Disconnect

Experiments and research focus on tactical metrics rather than strategic outcomes, with no clear connection between evidence generated and the business decisions it should inform.

Invisible ROI

The experimentation programme cannot demonstrate its strategic contribution, making it vulnerable to budget cuts during cost reduction exercises.

Language Gap

Practitioners speak in statistical significance and conversion rates while executives speak in revenue, market share, and strategic priorities. Evidence never crosses this translation barrier.

Warning Sign

If your organisation can run experiments but cannot show the board which strategic decisions those experiments influenced, you have an Evidence Gap. The problem is not the quality of your team or the sophistication of your tools. It is the absence of infrastructure connecting evidence to decisions.

Root Causes of the Evidence Gap

The Evidence Gap emerges from systemic issues in how organisations approach experimentation, not from individual failures or lack of effort.

1. The Frankenstack Problem

Organisations cobble together disconnected tools, such as Airtable for tracking, Jira for implementation, Notion for documentation, and Google Sheets for reporting. This fragmentation creates:

- No single source of truth for experimentation data

- Inconsistent methodology across teams

- Lost insights between tool handoffs

- Inability to track implementation outcomes

2. Methodology Inconsistencies

Without proper governance, teams develop their own approaches to experimentation:

- Variable statistical standards across teams

- Inconsistent test duration and sample size decisions

- Different definitions of “success”

- No quality control on experiment design

3. Strategic Misalignment

Experimentation programs often operate in isolation from strategic business objectives:

- Tests focus on tactical metrics rather than strategic outcomes

- No clear connection between experiments and OKRs

- Practitioners speak a different language than executives

- Limited visibility into how tests support business goals

4. Implementation Accountability Gap

The handoff from experiment to implementation often lacks proper governance:

- No tracking of whether winning variants were actually implemented

- Changes made during implementation that invalidate test results

- No validation that implemented changes delivered expected value

- Lack of feedback loop to improve future experiments

5. The Infrastructure Gap

Every player in the experimentation ecosystem is incentivised to optimise their own piece rather than build the whole system. Testing platforms help teams run experiments but have no mechanism for synthesis or decision influence. Research tools help teams organise findings, but only for one type of evidence. BI tools surface data beautifully, but a dashboard showing conversion rate over time is not decision influence. Agencies generate evidence but have no incentive to build the infrastructure that would reduce their clients’ dependency on them.

The Business Impact of the Evidence Gap

The Evidence Gap creates significant costs and missed opportunities that compound over time, undermining the strategic value of evidence generation across the organisation.

42% Average implementation failure rate for "successful" experiments

1.2M% Average annual waste from failed implementations

68% Experiments disconnected from strategic objectives

3.2% Duplicated experiments due to knowledge silos

"We were running over 200 experiments a year, but when we looked closer, we realized only about 15% of our product decisions were actually being guided by reliable experimentation data. The rest was essentially informed guesswork wearing a lab coat."

Hidden Costs of the Evidence Gap

- Resource Waste: Teams spend months on experiments that never influence decisions or duplicate previous work.

- Opportunity Cost: Conservative decision-making due to lack of trust in experimental data slows innovation.

- Programme Vulnerability: When budget cuts arrive, experimentation programmes that cannot demonstrate strategic influence are the first to be reduced. Without evidence of decision influence, the programme is seen as a cost centre rather than a strategic capability.

- Team Morale: Practitioners become demoralized when their work doesn't influence business decisions.

- Competitive Disadvantage: While competitors make confident, data-driven decisions, organizations with trust gaps fall behind.

Diagnosing Your Evidence Gap

Understanding the severity of your organisation’s Evidence Gap is the first step toward addressing it. Use these diagnostic questions to assess your current state.

Quick Trust Gap Assessment

1. What percentage of your successful experiments are fully implemented as tested?

Red flag: Less than 60% | Warning: 60-80% | Healthy: Above 80%

2. Can you track the business impact of experiments 3-6 months after implementation?

Red flag: No tracking | Warning: Partial tracking | Healthy: Comprehensive tracking

3. How often do teams unknowingly duplicate previous experiments?

Red flag: Frequently | Warning: Occasionally | Healthy: Rarely or never

4. Do executives regularly reference experiment results in strategic decisions?

Red flag: Never | Warning: Sometimes | Healthy: Consistently

5. How many different tools do you use to manage your experimentation program?

Red flag: 4+ tools | Warning: 2-3 tools | Healthy: Unified platform
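The thresholds above can be applied mechanically. The sketch below encodes the two numeric questions (1 and 5) and tallies all five answers. The function names and the `answers` dictionary are hypothetical, the sample answers are invented for illustration, and "Unified platform" is assumed to mean a single tool.

```python
RATINGS = ("red flag", "warning", "healthy")


def rate_implementation(percent_implemented: float) -> str:
    """Question 1: share of successful experiments implemented as tested."""
    if percent_implemented < 60:
        return "red flag"
    if percent_implemented <= 80:
        return "warning"
    return "healthy"


def rate_tool_count(tool_count: int) -> str:
    """Question 5: number of tools used to manage the programme."""
    if tool_count >= 4:
        return "red flag"
    if tool_count >= 2:
        return "warning"
    return "healthy"  # assumed: one tool counts as a unified platform


def summarise(ratings: dict) -> dict:
    """Count how many questions fall into each rating."""
    return {level: sum(1 for r in ratings.values() if r == level) for level in RATINGS}


# Invented sample answers; questions 2 to 4 are rated directly from the rubric.
answers = {
    "implemented_as_tested": rate_implementation(72),
    "impact_tracking": "warning",          # partial tracking
    "duplicate_experiments": "red flag",   # frequently
    "executive_reference": "warning",      # sometimes
    "tool_count": rate_tool_count(5),
}
print(summarise(answers))  # {'red flag': 2, 'warning': 3, 'healthy': 0}
```

The tally is only a summary. Which questions are flagged matters more than the count, because each one points at a specific layer of missing infrastructure.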

Get Your Complete Trust Gap Assessment

Our comprehensive Experimentation Confidence Quotient (ECQ) assessment provides a detailed analysis of your Trust Gap across five critical dimensions.

Closing the Evidence Gap

Addressing the Evidence Gap requires more than better tools or processes. It demands a fundamental shift in how organisations approach experimentation governance.

The Learning Loop Framework

Closing the Evidence Gap requires implementing a complete Learning Loop that connects experimentation to strategic decision-making:

1. Strategic Direction

2. Governance & Structure

3. Knowledge Creation

4. Implementation & Action

5. Organizational Learning

Key Elements of Evidence Gap Resolution

Governance Framework

Establish clear standards for experiment quality, methodology consistency, and decision protocols that ensure reliable results.

Strategic Alignment

Connect every experiment directly to business objectives, ensuring tests contribute to meaningful organizational outcomes.

Executive Visibility

Provide leadership with clear dashboards showing how experiments drive strategic outcomes, building confidence in the program.

Knowledge Preservation

Build institutional memory that captures and surfaces insights, preventing duplicate work and enabling compound learning.

Success Story

"Experimentation governance is not about adding bureaucracy; it's about creating the structure that enables experimentation to reach its full strategic potential."

Additional Resources

The Learning Loop Framework

Discover the complete framework for creating a continuous cycle of strategic experimentation and organizational learning.