How we work with you

What Flywheel Does

The complete experimentation & research governance platform. One system that replaces scattered trackers, enforces evidence quality, and connects experiments to the decisions they should inform.

Layer 1: Evidence Integrity

Can you trust the evidence your teams generate?

Before evidence can influence decisions, it has to be trustworthy. Layer 1 ensures that experiments are methodologically sound and research is properly documented.

Without this layer, everything built on top is unreliable.

Governance enforcement

Every experiment requires a hypothesis before launch, a primary metric before it runs, and a decision rule before results are read. Required fields and approval workflows ensure nothing skips the process. Research studies require documented objectives, methodology, and stakeholder connections.

- Required fields prevent experiments launching without hypotheses or success criteria
- Approval workflows route experiments through the right review process
- Research documentation standards ensure consistent methodology across studies
- Full audit trail on every change, visible to leadership

Quality oversight

Flywheel detects the governance violations that your current tools cannot see: post-hoc hypothesis changes, metric drift mid-experiment, tests stopped before reaching their planned sample size, and documentation added retrospectively. These are the patterns that make evidence unreliable.

- Hypothesis modification detection: flags any change after an experiment goes live
- Metric drift monitoring: alerts when the primary success metric is swapped
- Retroactive documentation detection: identifies records created after completion
- Collision detection: prevents concurrent experiments from contaminating each other

Workflow management

The operational layer that moves experiments and research studies through their lifecycle, from intake and prioritisation through design, execution, analysis, and decision. Every step is governed without being bureaucratic.

- Experiment pipeline from idea to decision, with stage-gate governance
- Research study workflow from commission to synthesis and action
- Custom statuses and workflows that match how your teams actually operate
- Notification and assignment system for reviewers, decision owners, and stakeholders

Layer 2: Evidence Synthesis

Can you connect what you know across teams and time?

Most organisations generate far more evidence than they use.

Studies sit in shared drives. Past experiment results decay in archived tickets. Different teams answer the same questions without knowing it.

Layer 2 turns isolated findings into connected, decision-ready knowledge.

Research synthesis

AI-assisted synthesis turns weeks of analysis into certified briefs. Cross-study analysis identifies patterns that no single study could reveal. Researchers verify accuracy, add nuance, and certify findings before they reach decision makers.

- AI drafts synthesis from multiple studies in minutes, not weeks
- Cross-study analysis reveals patterns across experiments and research
- Human verification ensures AI-generated insights are accurate and nuanced
- Certified briefs delivered in a format decision makers can act on immediately

Institutional memory

Every experiment result and every research finding is preserved, searchable, and connected to what came before it. When someone leaves, the knowledge stays. When a new team member joins, they can find everything the organisation has learned without asking.

- Search across all experiments, research studies, and syntheses
- Automatic linking between related studies and experiments
- "Has this been tested before?" detection prevents duplicate work
- Timeline view shows the complete evidence history for any product area

Layer 3: Decision Influence

Does trustworthy, synthesised evidence actually reach the decisions that matter?

This is the layer that makes evidence-based decision making real, not aspirational.

Layer 3 connects evidence to the people making strategic decisions and tracks whether those decisions were actually influenced by what your teams produced.

Strategic alignment

Every experiment and research study connects to a strategic objective and a decision owner before it begins. This is not tagging. It is a structural connection that ensures evidence is generated with a decision in mind, not as an activity without purpose.

- Map experiments and research to business objectives and OKRs
- Assign a decision owner to every study before it starts
- Visualise coverage: which strategic priorities have evidence and which are blind spots
- Prevent wasted effort on studies that are not connected to anything the business needs to decide

Decision tracking

When a strategic decision is made, Flywheel records which evidence informed it: which experiments, which research studies, which syntheses. This creates a provenance trail from insight to action. You can trace any decision back to the evidence, and any evidence forward to the decision it influenced.

- Log decisions with links to the experiments and research that informed them
- Track implementation: was the decision acted on, and what happened?
- Compare predicted impact (from the experiment) to actual business outcome
- Notify researchers and experimenters when their work influences a decision

Executive dashboards

Leadership visibility without practitioner gatekeeping. Dashboards update automatically and show what executives actually care about: which strategic decisions were informed by evidence, whether governance standards are being met, and what the experimentation and research programmes are delivering in business terms.

- Programme health: governance score, implementation rate, decision influence rate
- Strategic coverage: which objectives have evidence and which are blind spots
- Verified business impact: predicted vs actual, tracked over time
- No manual reporting. The dashboard is the report.

The Infrastructure

The foundation everything runs on

Governance capabilities are only as good as the infrastructure beneath them.

These are the structural elements that make Flywheel reliable, auditable, and fit for organisations that take evidence seriously.

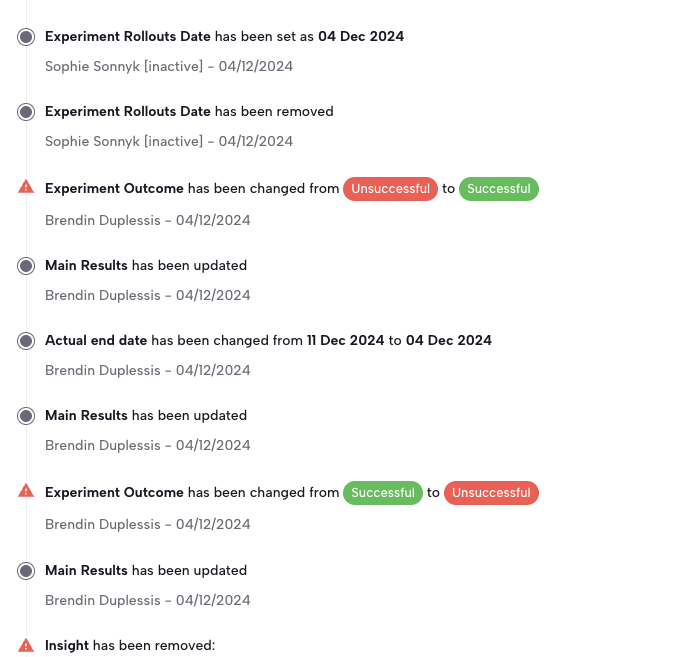

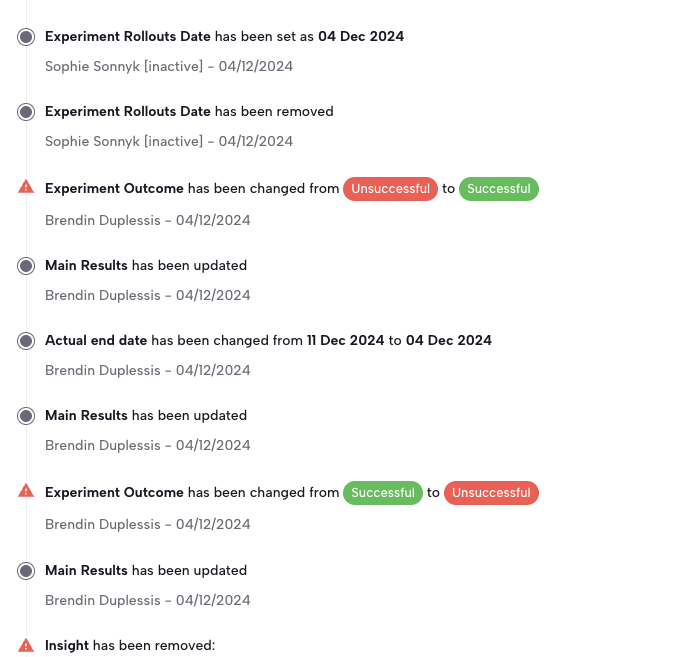

Tamper-proof audit trails

Every change to every experiment and research record is timestamped and attributed, including hypotheses, metrics, decision rules, and result interpretations. Nothing can be modified without a permanent record of what changed, when, and by whom. When leadership asks whether the evidence is trustworthy, the audit trail is the proof.

- Immutable change log on every field across all experiment and research records
- Detects post-hoc modifications: hypothesis changed after results, metric drift during a test
- Visible to executives without practitioner involvement
- Exportable for compliance and programme reviews

Governance scoring

Every experiment receives a governance score based on how well it met quality standards: was the hypothesis documented before launch, was the metric stable throughout, was a decision rule pre-registered, was the result acted upon. The programme-level score gives leadership a single number that reflects evidence quality across the organisation.

- Per-experiment governance score based on objective criteria
- Programme-level score aggregated across all teams and time periods
- Trend tracking shows whether governance is improving or degrading
- Breakdown by failure type: missing hypotheses, metric drift, post-hoc changes, unimplemented wins

Roles, permissions, and approval workflows

Control who can create, approve, launch, and close experiments. Set up approval gates that match your organisation's structure. A junior analyst can draft an experiment; a senior lead must approve it before launch. An executive can view everything without touching anything.

- Role-based access: viewer, user, admin, programme manager, and executive roles
- Executive read-only access to dashboards and audit trails
- SSO integration for enterprise identity management

People and process analytics

Scaling experimentation is not just about running more tests. It is about ensuring that quality does not degrade as more people and teams participate. People analytics shows you where process adherence is strong, where it is slipping, and where individuals or teams need support before bad habits become embedded practice.

- Per-team and per-individual governance adherence scores over time
- Identify which teams consistently skip governance steps and where training is needed
- Track progression: are new team members improving their process compliance as they onboard?
- Spot early warning signs: a team's governance score dropping signals that intervention is needed before results become unreliable
- Compare process maturity across teams, regions, or business units to allocate support where it matters most

Who Flywheel Serves

Different roles, different value

Flywheel is a single platform. But executives, programme leads, and practitioners each use it differently, and each gets something distinct from it.

Executives - CPOs, CDOs, CFOs, CTOs & VPs

Decision accountability

Every experiment has a named decision owner and a pre-registered decision rule. When results arrive, there is a documented record of what was decided and why. No more claimed wins without evidence of implementation.

Programme visibility without asking

Executive dashboards show governance compliance, implementation rates, and verified business impact. You see the truth about your programme directly, not a curated highlight reel assembled by the team.

Defensible investment cases

When budget reviews arrive, Flywheel provides the data on which strategic decisions were informed by experimentation. The programme justifies itself with evidence, not promises.

Cross-team visibility

See what every team is testing, where experiments overlap, which objectives have evidence coverage, and which have blind spots. No more siloed reporting from each team.

Leads - Heads of Experimentation, CoE Leads, VP Optimisation, Research Ops Directors

Adoption visibility across every team

See which teams are following governance standards and which are not. Track adoption by team, region, or business unit. Know whether new teams are onboarding well or cutting corners before bad habits become embedded practice.

Early warning on quality degradation

A team's governance score dropping is a signal, not a surprise. People and process analytics flag where adherence is slipping so you can intervene with coaching or training before unreliable results start reaching decisions.

Know where to focus support

Per-team and per-individual process adherence shows exactly where support is needed. A new team missing decision rules on every experiment needs a different intervention than a mature team that has started skipping sample size calculations. Allocate your time where it will have the most impact.

Consistent standards without policing

Governance gates enforce minimum standards so you do not have to. Required fields, approval workflows, and methodology checks mean teams meet the bar by default. Your role shifts from chasing compliance to enabling capability.

Scale without losing control

Adding teams, regions, or business units to the programme should not mean governance quality drops. Flywheel gives you the infrastructure to scale from one team to twenty with the same visibility, the same standards, and the same accountability at every level.

Prove the programme is working

Track governance maturity over time across the organisation. Show leadership that the programme is not just running experiments but systematically improving how evidence is generated, synthesised, and used. This is how you protect the budget and justify expansion.

Practitioners - Experimentation Specialists, Product Managers, UX Researchers, Insights Teams

One system instead of ten

No more maintaining experiment records across Notion, Airtable, Jira, Google Sheets, and Slack. One governed system handles the complete lifecycle from ideation through to business outcome.

Collision prevention that works

Automatic detection of experiments that overlap by audience, page, or feature. You find out before launch, not after contaminated results force a rerun and waste months of work.

Provable impact for your programme

When leadership questions the value of experimentation, Flywheel provides the evidence trail connecting your work to business decisions. Your programme's contribution is documented, not anecdotal.

Institutional memory that prevents rework

Search past experiments by outcome, objective, or hypothesis. Know what has been tested before and what was learned. Stop repeating experiments that were run two years ago by someone who has since left.

Structured ideation tied to strategy

Your experiment backlog maps to business objectives and decision owners. Prioritisation reflects strategic need, not just ease of execution. Every experiment has a reason to exist beyond "we had capacity."

AI synthesis with human verification

AI drafts insights and cross-study synthesis in minutes rather than days. You verify accuracy, add nuance, and certify findings for executive consumption. The quality stays with you; the speed comes from AI.